HALO

Harmonizing Asymmetric Multi-core Architectures with Linked communication for Optimal design

A model-driven workflow for describing heterogeneous systems, validating communication intent across domains, and generating portable and platform-specific implementation artifacts from one unified model.

Why HALO Exists

Linux applications, RTOS tasks, bare-metal firmware, and accelerator logic all need to exchange data reliably.

Architecture diagrams, memory maps, code, and protocol assumptions often live in different places and drift apart.

Architecture, interfaces, and hardware mapping are modeled separately and then composed into one system view.

The HALO Concept

System Architecture

Describes components, platforms, functions, and logical connections between endpoints.

Data Types

Provides a library of pre-defined data structures used by interfaces. Definitions can be reused across multiple interfaces.

Interfaces

IDL defines the interfaces between components, focusing on the data being exchanged with access and integrity rules.
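As an illustration of what an integrity rule could mean at runtime, the sketch below appends a CRC-16 to a serialized payload and checks it on the receiving side. This is only a sketch under stated assumptions: the source does not say which CRC-16 variant HALO uses, so the CCITT polynomial provided by Python's `binascii.crc_hqx`, the initial value `0xFFFF`, the big-endian trailer, and the helper names `seal` and `verify` are all hypothetical.

```python
import binascii
import struct

# Assumed initial value; the CRC-16 variant used by HALO is not stated in the source.
CRC_INIT = 0xFFFF


def seal(payload: bytes) -> bytes:
    """Append a big-endian CRC-16 (CCITT polynomial) trailer to the payload."""
    crc = binascii.crc_hqx(payload, CRC_INIT)
    return payload + struct.pack(">H", crc)


def verify(frame: bytes) -> bool:
    """Recompute the CRC over the payload and compare it with the trailer."""
    if len(frame) < 2:
        return False
    payload, trailer = frame[:-2], frame[-2:]
    (expected,) = struct.unpack(">H", trailer)
    return binascii.crc_hqx(payload, CRC_INIT) == expected


# A 1024-byte zeroed payload stands in for a serialized data structure.
frame = seal(bytes(1024))
corrupted = bytes([frame[0] ^ 0x01]) + frame[1:]  # flip one bit in the payload

print(verify(frame))
print(verify(corrupted))
```

The writer seals the data before publishing it and the reader verifies it before use, so a torn or corrupted transfer is rejected instead of silently consumed.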

Hardware Mapping

HML defines the profile of how components exchange data, focusing on data placement, synchronization, and transfer behaviour. Each HML profile maps uniquely to the "communication" type defined in ADL.

Hardware Mapping Library

Contains various descriptions of profiles which can be imported into HML.

HALO Domain Specific Language (DSL) Inputs

MySystem {

components {

Core1 : Component {

Type: Application,

Platform: Linux,

Function: GeneralProcessing

}

Core2 : Component {

Type: Application,

Platform: FreeRTOS,

Function: RealTimeProcessing

}

}

connections {

connection LinuxToRTOS {

from Core1 to Core2

interface: TstIf_1

profile: sharedMemoryProfile1

}

}

}

include *.iddl

interface TstIf_1 {

access {

read { SharedData SharedData2 }

write { SharedData SharedData2 }

}

integrity {

crc16 { SharedData2 }

}

}

dataStructures {

SharedData {

integer cnt

integer dataId

byte dataPayload[1024] = 0

}

SharedData2 {

integer dataPayload[1024]

}

}

#include *.hmml

SharedMemory sharedMemoryProfile1 {

memSize: 4MB

baseAddress: 0xA0000000

}

Profiles {

SharedMemory {

memSize: 64MB

baseAddress: 0x80000000

cacheable: True

policy: WriteBack

cacheLine: 64B

coherence: Software

syncType: AcquireRelease

permissions: RW

priority: High

syncBlocking: True

syncPrimitive: mutex

}

}
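In the DSL above, `sharedMemoryProfile1` sets only `memSize` and `baseAddress`, while the library `SharedMemory` profile defines many more attributes. One plausible reading, which is an assumption and not confirmed by the source, is that a named profile starts from the library defaults and overrides selected values. The sketch below shows that reading; `parse_size`, `resolve_profile`, and the binary interpretation of `MB` are hypothetical.

```python
# Library defaults, copied from the Profiles { SharedMemory { ... } } block.
LIBRARY_PROFILES = {
    "SharedMemory": {
        "memSize": "64MB",
        "baseAddress": 0x80000000,
        "cacheable": True,
        "policy": "WriteBack",
        "cacheLine": "64B",
        "coherence": "Software",
        "syncType": "AcquireRelease",
        "permissions": "RW",
        "priority": "High",
        "syncBlocking": True,
        "syncPrimitive": "mutex",
    }
}

# Assumption: size suffixes are binary multiples (1MB == 1024 * 1024 bytes).
UNITS = {"B": 1, "KB": 1024, "MB": 1024**2, "GB": 1024**3}


def parse_size(text: str) -> int:
    """Convert a size literal such as '4MB' into a byte count."""
    # Longer suffixes are tried first so that 'MB' is not mistaken for 'B'.
    for suffix in ("KB", "MB", "GB", "B"):
        if text.endswith(suffix):
            return int(text[: -len(suffix)]) * UNITS[suffix]
    raise ValueError(f"unknown size literal: {text!r}")


def resolve_profile(kind: str, overrides: dict) -> dict:
    """Start from the library defaults and apply the named profile's overrides."""
    resolved = dict(LIBRARY_PROFILES[kind])
    resolved.update(overrides)
    return resolved


profile = resolve_profile(
    "SharedMemory", {"memSize": "4MB", "baseAddress": 0xA0000000}
)
end = profile["baseAddress"] + parse_size(profile["memSize"])
print(hex(profile["baseAddress"]), hex(end), profile["policy"])
```

Under these assumptions the resolved region spans `0xA0000000` up to, but not including, `0xA0400000`, and inherits the write-back cache policy from the library profile.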

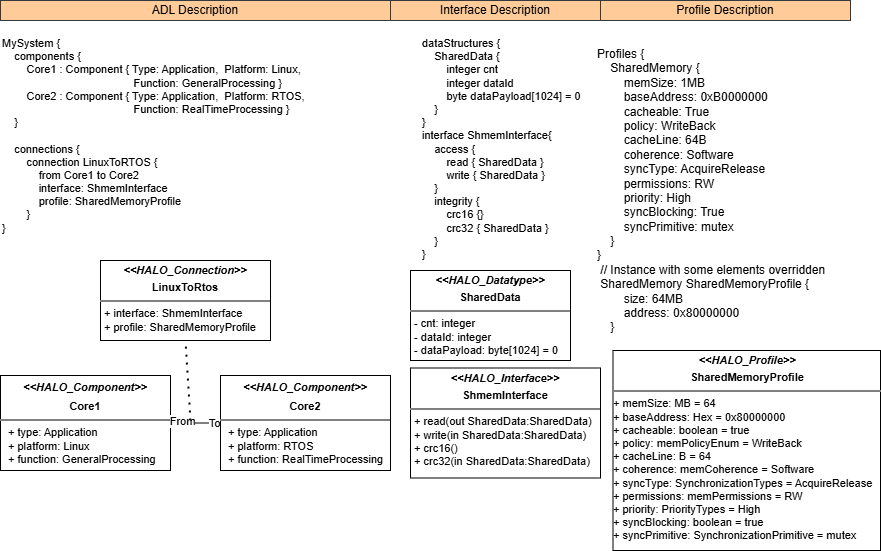

HALO UML Inputs

HALO components are represented as UML classes with component stereotypes and attributes such as type, platform, and function.

Interfaces are UML interface-like classes with operations for read, write, and integrity rules, while referenced structs become data-type classes.

Profiles are stereotyped UML classes, and connections are represented as association classes linking source and destination components.

If the DSL inputs are the "source code" of HALO, the UML artifacts are generated from them for analysis and visualization in UML tools.

- architecture.puml - PlantUML model of the architecture

- interfaces/*.puml - PlantUML views of the interfaces

- profiles/*.puml - PlantUML views of the profiles

- halo_model.uml - UML model of the HALO system (importable into UML tools)

- halo_profile.xmi - XMI representation of the HALO profiles (for UML tools)

HALO Inputs Overview

HALO supports DSL and UML inputs

Author in HADL

Create ADL, IDL, IDDL, HMML, and HML files in the textual HALO workflow.

Compose

Build the unified HALO model.

Export

Write the unified model in JSON and XMI formats, with PlantUML views for external tools.

UML Tools

Users can import a HALO model as XMI, design and analyze it in UML tools, and export it back in XMI format.

UML XMI Import

HALO can import UML XMI and export DSL descriptions, supporting reproducible round-trips.

HADL - Hardware Architecture Description Language (ADL / IDL / HML)

NOTE: Users can create UML diagrams and export them as XMI files for HALO import without having to write them in the DSL first.

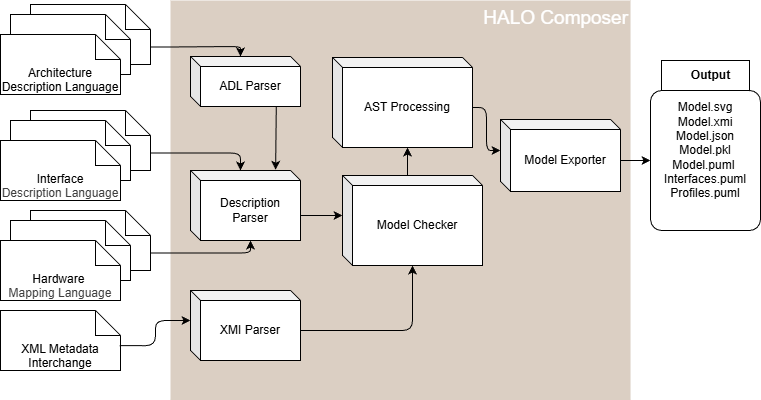

HALO Composer Overview

End-to-End Flow

Author Models

Write DSL files or import a UML XMI model.

Parse

Composer parses the input files into structured IR models.

Compose

The composer resolves cross-references and builds one unified HALO model.

Validate

Check interfaces, profiles, connection semantics, and basic referential integrity.

Emit Artifacts

Write JSON, Pickle, DOT, SVG, PlantUML, UML XMI, and DSL artifacts.

Analyze

Generate a summary report of components, connections, warnings, and errors.
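The Validate step above mentions basic referential integrity. A minimal sketch of such a check is shown below. The connection keys follow the JSON record shown in this deck; the rest of the dictionary layout and the function name `check_references` are assumptions for illustration and are not the Composer's actual IR.

```python
from typing import Dict, List


def check_references(model: Dict) -> List[str]:
    """Return one message per dangling reference; an empty list means consistent."""
    errors: List[str] = []
    for conn in model["connections"]:
        # Both endpoints must be declared components.
        for end in ("from_component", "to_component"):
            if conn[end] not in model["components"]:
                errors.append(f"{conn['name']}: unknown component {conn[end]!r}")
        if conn["interface"] not in model["interfaces"]:
            errors.append(f"{conn['name']}: unknown interface {conn['interface']!r}")
        if conn["profile"] not in model["profiles"]:
            errors.append(f"{conn['name']}: unknown profile {conn['profile']!r}")
    # Every structure named by an interface rule must exist as a data type.
    for name, iface in model["interfaces"].items():
        for rule, structs in iface.items():
            for struct_name in structs:
                if struct_name not in model["dataTypes"]:
                    errors.append(f"{name}.{rule}: unknown data type {struct_name!r}")
    return errors


model = {
    "components": {"Core1": {}, "Core2": {}},
    "interfaces": {
        "TstIf_1": {
            "read": ["SharedData", "SharedData2"],
            "write": ["SharedData", "SharedData2"],
            "crc16": ["SharedData2"],
        }
    },
    "dataTypes": {"SharedData": {}, "SharedData2": {}},
    "profiles": {"sharedMemoryProfile1": {}},
    "connections": [
        {
            "name": "LinuxToRTOS",
            "from_component": "Core1",
            "to_component": "Core2",
            "interface": "TstIf_1",
            "profile": "sharedMemoryProfile1",
        }
    ],
}

# A copy of the model whose connection points at a profile that does not exist.
broken = dict(
    model,
    connections=[dict(model["connections"][0], profile="missingProfile")],
)

print(check_references(model))
print(check_references(broken))
```

Collecting messages instead of raising on the first problem matches the idea of a summary report that lists all warnings and errors in one pass.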

DSL inputs:

halo compose --hadls-root "path to hadls" --output-dir "path to output directory" --include-stdlib-profiles

UML/XMI input:

halo compose --from-xmi "path to uml file" --output-dir "path to output directory"

IR - Intermediate Representation

The --include-stdlib-profiles argument includes the standard library of profiles built into HALO Composer, which users can then reference, for example:

- SharedMemory profiles

- BlackBoard profiles

- EventChannel profiles

The Unified Model

For humans and tools

One inspectable, tool-importable JSON view of the full communication architecture.

For tools

1. Pickle file with structured dictionaries for future tooling.

2. UML XMI file for integration with UML tools.

For traceability

Every generated platform or protocol artifact can be traced back to components, interfaces, and profiles.

{

"name": "LinuxToRTOS",

"from_component": "Core1",

"to_component": "Core2",

"interface": "TstIf_1",

"profile": "sharedMemoryProfile1"

}

In practice, the model bundles components, connections, interfaces, profiles, dataTypes, and value-domain metadata in one place.
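To illustrate how the JSON view can support traceability, the sketch below loads a connection record like the one above and builds reverse indexes, so that a profile or component can be traced to the connections that use it. The top-level `connections` key and the helper `index_connections` are assumptions; the real file layout may differ.

```python
import json
from collections import defaultdict

# Assumed layout: connection records live in a top-level "connections" list.
UNIFIED_MODEL_JSON = """
{
  "connections": [
    {
      "name": "LinuxToRTOS",
      "from_component": "Core1",
      "to_component": "Core2",
      "interface": "TstIf_1",
      "profile": "sharedMemoryProfile1"
    }
  ]
}
"""


def index_connections(model: dict, key: str) -> dict:
    """Map each value of `key` to the names of the connections that carry it."""
    index = defaultdict(list)
    for conn in model.get("connections", []):
        index[conn[key]].append(conn["name"])
    return dict(index)


model = json.loads(UNIFIED_MODEL_JSON)
by_profile = index_connections(model, "profile")
by_source = index_connections(model, "from_component")

print(by_profile)
print(by_source)
```

The same lookup works for `interface` or `to_component`, which is enough to answer questions such as "which connections are affected if this profile changes?".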

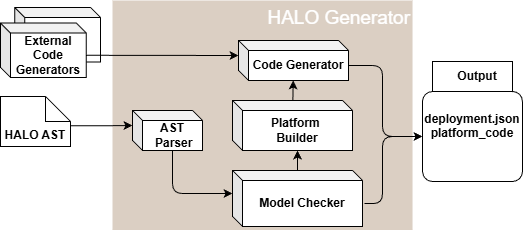

HALO Code Generator

A built-in plugin generates the HALO API for each target platform; this API contains the core functionality and abstractions.

Independent plugins generate platform-specific code for the target platform, including initialization and integration files.

Independent plugins generate communication-protocol-specific code based on the profiles used in the ADL communication description.

The HALO Framework lets users generate a platform generator for the target platform, including initialization and integration files. Users can then continue to develop and customize these generators to fit their specific requirements.

Each platform generator is designed to work with a specific target platform, ensuring compatibility and seamless integration.

Each protocol generator covers a specific set of profiles. For example, the SharedMemory generator handles profiles that involve shared memory communication patterns.

The HALO Framework lets users generate protocol generators as plugins, which can be developed and installed independently of the core framework. This design makes the code generation process extensible and customizable, so it can support a wide range of communication protocols and profiles.

HALO Is Designed as a Plugin Ecosystem

The CLI command "halo create-generator" can scaffold new platform or protocol generator packages with config, render logic, and Jinja2 templates.

Generators are auto-discovered through Python entry points for platforms and for protocols.
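Entry-point discovery of this kind can be sketched with the standard library's `importlib.metadata`. The group names below are hypothetical placeholders, since the source does not state which entry-point groups HALO uses, and the function `discover` is not part of HALO.

```python
from importlib.metadata import entry_points

# Hypothetical group names; the real ones are defined by HALO, not by this sketch.
PLATFORM_GROUP = "halo.platform_generators"
PROTOCOL_GROUP = "halo.protocol_generators"


def discover(group: str) -> dict:
    """Return {entry point name: 'module:object' target} for one group."""
    eps = entry_points()
    if hasattr(eps, "select"):
        # Python 3.10+ returns a selectable collection.
        selected = eps.select(group=group)
    else:
        # Python 3.8 and 3.9 return a plain dict of group -> entry points.
        selected = eps.get(group, [])
    return {ep.name: ep.value for ep in selected}


# Nothing is imported here; targets are only listed until a generator is needed.
print(discover(PLATFORM_GROUP))
print(discover(PROTOCOL_GROUP))
```

A generator package would advertise itself by declaring an entry point in the matching group in its packaging metadata; after installation it shows up in the returned dictionary without any change to the core tool.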

Scaffolding Platform and Protocol Generators

The CLI command "halo create-generator" runs an interactive questionnaire or accepts non-interactive flags, then routes to the scaffold factory to create either a platform or a protocol generator package.

Platform scaffolds create an installable Python package with Python render logic and platform Jinja2 templates that add startup, integration, and target-specific support files.

Protocol scaffolds create an installable Python package with Python render logic and Jinja2 templates for each supported platform, so one protocol can target multiple environments.

- A Python package with entry points so HALO can auto-discover the generator later.

- Python render.py as the main implementation hook where users prepare data and decide which files to generate.

- Python config.py containing generator metadata such as name, module path, output directory, and supported platforms.

- A templates/ directory that becomes the main place to customize how the code will be generated.

- Edit render.py to add support for new features or modify existing behavior.

- Edit or add Jinja2 templates under src/<module>/templates/ to change emitted headers, sources, and config/build files.

- Install the generator package with pip install -e . so that HALO can discover it.

Interactive mode: halo create-generator

Silent mode: halo create-generator --generator-type platform --no-interactive --name my_platform --description "My Platform Generator" --author "Your Name" --email "you@example.com" --platform my_platform

Silent mode: halo create-generator --generator-type protocol --no-interactive --name my_protocol --description "My Protocol Generator" --author "Your Name" --email "you@example.com" --platform my_protocol --supported-platforms my_platform

HALO Generator Overview

HALO Code Generation with Jinja2

Official Jinja2 docs: jinja.palletsprojects.com/en/stable/

The AST Parser loads the unified model in Pickle (PKL) format, runs the analyzer, and selects all platforms from the unified model unless the user filters them explicitly through command-line arguments.

The platform builder builds a platform-scoped model containing only the relevant components, connections, interfaces, profiles, structs, and communication interfaces.
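The scoping step can be pictured as a filter over the unified model. The sketch below is an assumption-laden illustration, not HALO's implementation: the dictionary layout, the lowercase `platform` key, the function `platform_scope`, and the extra `Core3`, `TstIf_2`, and `eventChannelProfile1` entries are hypothetical and only there to show that unrelated items are dropped.

```python
def platform_scope(model: dict, platform: str) -> dict:
    """Keep only what code generation for a single platform needs."""
    components = {
        name: comp
        for name, comp in model["components"].items()
        if comp["platform"] == platform
    }
    # A connection is relevant if either endpoint runs on this platform.
    connections = [
        conn
        for conn in model["connections"]
        if conn["from_component"] in components or conn["to_component"] in components
    ]
    interfaces = {conn["interface"] for conn in connections}
    profiles = {conn["profile"] for conn in connections}
    return {
        "platform": platform,
        "components": components,
        "connections": connections,
        "interfaces": {n: model["interfaces"][n] for n in sorted(interfaces)},
        "profiles": {n: model["profiles"][n] for n in sorted(profiles)},
    }


model = {
    "components": {
        "Core1": {"platform": "Linux"},
        "Core2": {"platform": "FreeRTOS"},
        "Core3": {"platform": "BareMetal"},  # hypothetical third component
    },
    "interfaces": {"TstIf_1": {}, "TstIf_2": {}},
    "profiles": {"sharedMemoryProfile1": {}, "eventChannelProfile1": {}},
    "connections": [
        {"name": "LinuxToRTOS", "from_component": "Core1", "to_component": "Core2",
         "interface": "TstIf_1", "profile": "sharedMemoryProfile1"},
        # Hypothetical second connection that the FreeRTOS scope should not see.
        {"name": "LinuxToBareMetal", "from_component": "Core1", "to_component": "Core3",
         "interface": "TstIf_2", "profile": "eventChannelProfile1"},
    ],
}

rtos = platform_scope(model, "FreeRTOS")
print(sorted(rtos["components"]), [c["name"] for c in rtos["connections"]])
```

Whether the peer component on the other side of a connection should also be carried into the scoped model is a design choice; this sketch keeps only local components and leaves the peer name inside the connection record.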

HALO renders internal code first, then platform-specific code, and lastly protocol-specific code. Jinja2 templates are what turn the unified model data into actual C/C++-style output code.

- Keep templates declarative: move complex computations into render.py and pass the necessary context to the Jinja2 environment.

- Use Jinja2 when you want to change output structure, naming, boilerplate, or file layout without rewriting the whole generator.

- Add new templates when a platform or protocol needs extra files instead of forcing everything into one render step.

- The unified model decides what needs to be emitted; the Jinja2 generator decides how it should look on a given target.

- Templates should contain preservation blocks, which prevent regeneration from overwriting user modifications to the generated files: `/* HALO USER CODE BEGIN: ... */` and `/* HALO USER CODE END: ... */`.

- This means users can safely place handwritten code (for example includes, init logic, ISR hooks, protocol extras, and declarations) inside those markers without losing it on the next generate step or when the generator is updated.
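One way such preservation could work is to copy the bodies of the user blocks from the previously generated file into the freshly rendered text. The sketch below shows that idea with a regular expression. HALO's actual mechanism is not described in the source, so the function `carry_over` and the exact matching rules, including the requirement that each BEGIN marker ends its line, are assumptions.

```python
import re

# Matches one preserved block; the END marker must repeat the BEGIN name.
USER_BLOCK = re.compile(
    r"/\* HALO USER CODE BEGIN: (?P<name>[^*]+?) \*/\n"
    r"(?P<body>.*?)"
    r"/\* HALO USER CODE END: (?P=name) \*/",
    re.DOTALL,
)


def carry_over(previous: str, fresh: str) -> str:
    """Copy user block bodies from the previous output into newly rendered text."""
    saved = {m.group("name"): m.group("body") for m in USER_BLOCK.finditer(previous)}

    def restore(match):
        name = match.group("name")
        # Blocks that did not exist before keep the template's default body.
        body = saved.get(name, match.group("body"))
        return (
            f"/* HALO USER CODE BEGIN: {name} */\n"
            f"{body}"
            f"/* HALO USER CODE END: {name} */"
        )

    return USER_BLOCK.sub(restore, fresh)


previous = (
    "void halo_disable_interrupts(void) {\n"
    "/* HALO USER CODE BEGIN: halo_disable_interrupts */\n"
    "    __disable_irq();\n"
    "/* HALO USER CODE END: halo_disable_interrupts */\n"
    "}\n"
)
fresh = (
    "/* regenerated */\n"
    "void halo_disable_interrupts(void) {\n"
    "/* HALO USER CODE BEGIN: halo_disable_interrupts */\n"
    "/* HALO USER CODE END: halo_disable_interrupts */\n"
    "}\n"
)

merged = carry_over(previous, fresh)
print(merged)
```

Everything outside the markers comes from the new render, so template updates still take effect, while the handwritten line inside the block survives.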

/* HALO USER CODE BEGIN: halo_disable_interrupts */

// user implementation stays here across regeneration

/* HALO USER CODE END: halo_disable_interrupts */

Existing Application Projects

The user runs halo compose and halo generate during the project's normal code-generation step.

HALO emits a generated package with an include/ tree and a src/ tree. Those generated headers and sources are then added to the existing application build system.

The existing application includes generated headers like halo_api.h, calls generated platform init functions, and uses generated typed APIs such as halo_send_*() and halo_recv_*().

- Generated common HALO files such as halo_api.h, halo_cfg.h, halo_structs.h, halo_api.c, and halo_channels.c.

- Generated platform files such as halo_platform_init.h and halo_platform_init.c.

- Generated protocol files placed alongside the platform output in the same HALO layer.

- Optional custom platform/protocol generator packages installed prior to running the generators.

- Business logic, control loops, scheduling, services, and application-specific behavior.

- Hardware handles, RTOS objects, external buffers, interrupts, and other target-specific integration details.

- Build system wiring that compiles generated src/ files and exports generated include/ directories.

- Custom code placed inside preserved `HALO USER CODE BEGIN/END` blocks.

Representative integration flow

# 1. Generate HALO layer for the project

halo compose --hadls-root "./hadls" --output-dir "./halo_out" --include-stdlib-profiles

halo generate --composer-output-dir "./halo_out" --output-dir "./generated"

# 2. Add HALO layer to the existing application build

- add ./generated/codegen/<target>/include to include paths

- add ./generated/codegen/<target>/src/*.c to sources

# 3. Use HALO from existing application code

#include "halo_api.h"

halo_core1_init_BareMetal();

halo_send_BlackBoardData_Core1ToCore2(&tx_data);

Thank You

Thank you for exploring HALO's model-driven workflow.